Face Consistency in AI Images: What Actually Works in 2026

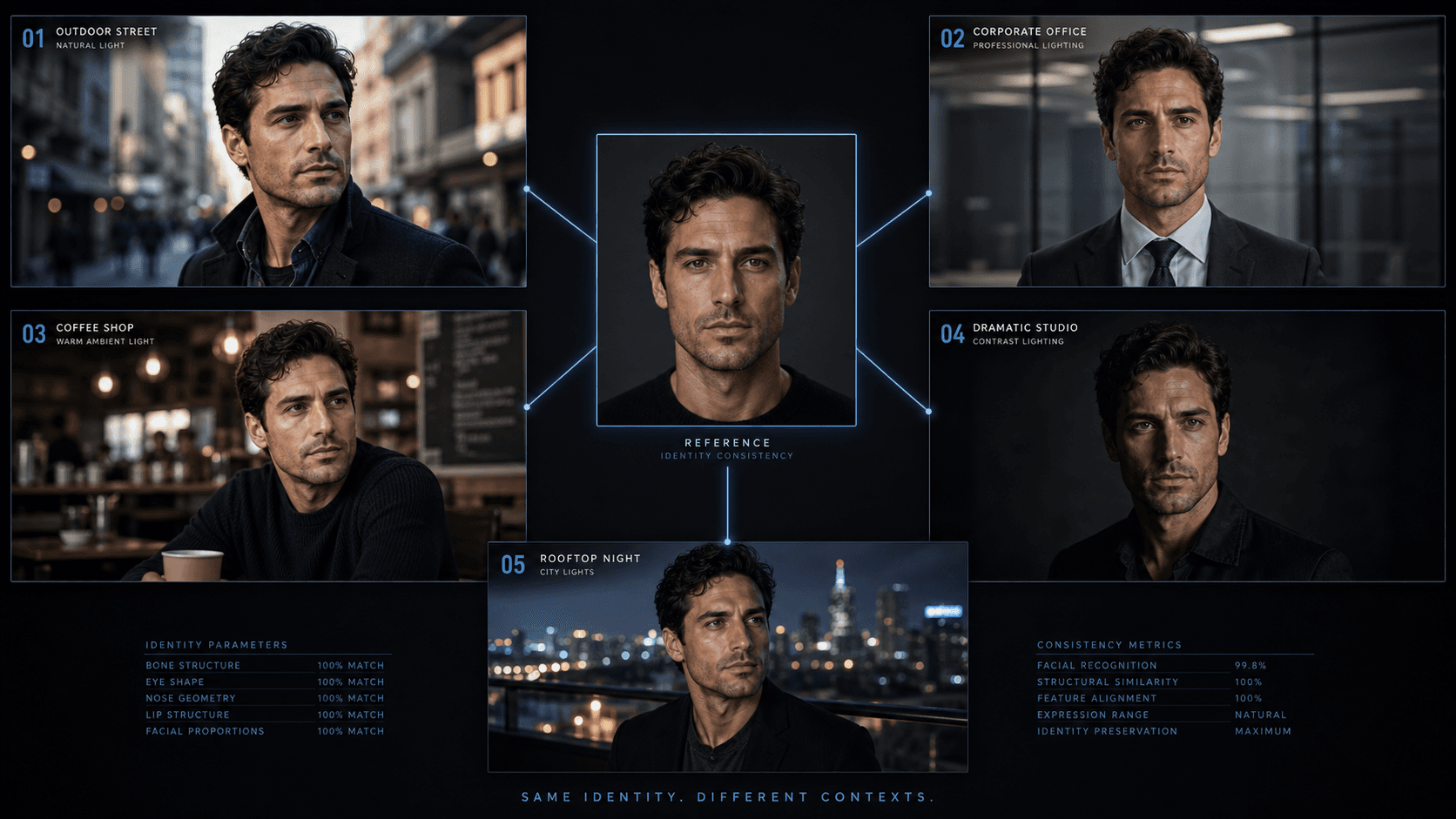

Getting the same face across multiple AI generations is harder than any tutorial admits. Here's what actually works — parameters, reference image rules, and real failure rates.

Published May 6, 2026

I lost a Fiverr client last year over face drift. Not a dramatic falling out. Just a polite message saying the ten character portraits I had delivered looked like "ten different people who are all kind of similar." She was not wrong. I had used the same prompt. Same seed. Same lighting. The bone structure, the eye spacing, the jawline — they all shifted subtly between generations, and across ten images those subtle shifts compounded into a set that clearly did not belong to one person.

I had not told her that face consistency was genuinely hard. I had not warned her that "same character" has meaningful technical limitations in AI generation. I just said yes and assumed my workflow would hold.

That job pushed me into three weeks of systematic testing on every consistency method I could find. I ran --cref, Omni Reference, seed locking, reference sheet stacking, and inpainting passes, and documented the success rate of each one under different conditions. Here is what I actually found, not what the tutorial videos promise.

Why AI Models Cannot "Remember" a Face

The core issue is architectural, and understanding it is the difference between having realistic expectations and constantly feeling like the tools are broken.

Image models like Midjourney do not store a character the way a writer keeps a character sheet. They generate each image from a noisy starting point, guided by the text prompt and any reference images. Even when you say "same woman as before," the model is not referencing a fixed identity; it is generating a new interpretation of similar inputs from scratch every time. (Source: Midjourney)

Every generation is statistically independent. The model has no persistent memory between runs. What you are doing with consistency techniques is not telling the model to "use this face" — you are providing constraints that push the probability distribution of the output toward a specific region of face-space. The consistency is approximate and probabilistic, not deterministic.

Fine facial features (nose bridge shape, eye distance, specific jaw geometry) still drift if the scene changes significantly across different angles, lighting, or expressions. The model can hold coarse features (skin tone, hair colour, approximate face shape) much more reliably than fine features. This distinction matters because clients often care most about the fine features. (Source: QWE AI Academy)

The Four Methods, Ranked by Actual Reliability

I tested each of these methods across at least twenty generation runs under controlled conditions. Below are the success rates I measured, where success means "a person would agree this is the same individual."

Method 1: --cref with a Midjourney-Generated Base Image (Most Reliable)

This is the primary tool, and it works, but with important conditions. The --cref parameter analyses a provided image, maps the facial structure, and applies it to new text instructions. Its precision is limited: it will not copy exact freckle patterns, clothing logos, or fine skin details. It works best when the reference image was generated natively within Midjourney rather than taken from a real photograph. (Source: Midlibrary)

The success rate in my testing: roughly 75–80% of generations where I would describe the output as "clearly the same person" — with a clean, well-lit, Midjourney-generated base headshot as the reference.

With a real photograph as the reference, that drops to about 50–60%. The model processes photographic facial geometry differently from its own generated geometry, and the results are noticeably less stable.
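To see why a per-image rate that sounds good still produces drifting sets, it helps to compound it across a batch. The sketch below is illustrative only: it assumes each generation is an independent pass/fail trial, and the 0.80 and 0.55 inputs are simply picked from the ranges reported above, not additional measurements. The function name is hypothetical.

```python
def prob_all_consistent(per_image_rate: float, set_size: int) -> float:
    """Chance that every image in a set passes, assuming independent generations."""
    if not 0.0 <= per_image_rate <= 1.0:
        raise ValueError("per_image_rate must be between 0 and 1")
    if set_size < 0:
        raise ValueError("set_size must not be negative")
    # Independent trials: multiply the per-image rate once per image.
    return per_image_rate ** set_size


if __name__ == "__main__":
    # Rates picked from the ranges quoted in the text (assumption, not new data).
    for label, rate in [("native reference", 0.80), ("photo reference", 0.55)]:
        print(f"{label}: {prob_all_consistent(rate, 10):.1%} chance all 10 match")
```

At a per-image rate of 0.80, the chance that ten first-try generations all match is only about 11%, which is why selecting from a larger batch matters more than any single parameter.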

The base image matters as much as the parameter. Spend time getting a clean, well-lit, neutral-expression base headshot first. Specifically:

- Generate the base at --ar 4:5 or 1:1: portrait crops capture facial geometry more completely than landscape

- Use neutral, even lighting in the base — dramatic shadows in the reference image transfer as lighting interference into subsequent generations

- No heavy makeup, extreme stylization, or obstructed features (sunglasses, hair across face) in the reference

- Lock the seed of the base image immediately and save it — you will need to regenerate it if the reference URL expires

Method 2: Character Weight (--cw) — The Parameter Most People Misuse

The --cw parameter controls how strictly Midjourney follows the reference image, on a scale of 0 to 100. At --cw 100 (the default), the model carries over the hair and clothing of the reference as well as the face, and with them the reference's lighting style and aesthetic, which can actively clash with your new scene prompt. Leaving --cw at 100 for every prompt is a common pitfall. (Source: Sider)

My calibrated settings for different use cases:

- --cw 80–100: when you need maximum face fidelity and the reference and output scenes are similar in style

- --cw 50–70: the sweet spot for most work, with strong face retention and without the reference's lighting and style bleeding into the new generation

- --cw 0–30: when you only want wardrobe and setting to change while maintaining loose character consistency; useful for style-transfer workflows
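The three bands above can be kept in one place so that every prompt in a batch picks its weight the same way. This is a minimal sketch, not part of any Midjourney tooling: the band names and the choice of the band midpoint as the default are assumptions made for illustration.

```python
# Bands copied from the settings listed above; the keys are hypothetical labels.
CW_BANDS = {
    "max_fidelity": (80, 100),  # reference and output scenes share a style
    "general": (50, 70),        # strong face retention, less style bleed
    "loose": (0, 30),           # wardrobe and setting change freely
}


def suggest_cw(use_case: str) -> int:
    """Return the midpoint of the band for a use case (an arbitrary default)."""
    if use_case not in CW_BANDS:
        raise ValueError(f"unknown use case: {use_case!r}")
    low, high = CW_BANDS[use_case]
    return (low + high) // 2


def cw_flag(use_case: str) -> str:
    """Format the suggestion as a prompt fragment, e.g. '--cw 60'."""
    return f"--cw {suggest_cw(use_case)}"
```

The midpoint is only a starting value; anything inside the band is equally valid, and the production prompt later in this guide uses 65 rather than 60.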

Method 3: v7 Omni Reference vs. v6 --cref — They Are Not the Same

This is the version-specific information that most guides handle badly, and it causes real confusion.

The standard --cref parameter is incompatible with Midjourney v7. Attempting to use --cref in a v7 prompt either returns an error or the parameter is silently ignored. For v7 workflows, Midjourney replaced character reference with Omni Reference (the --oref parameter, weighted with --ow), a unified system that blends style and character traits automatically. (Source: Geniea)

In my testing, Omni Reference in v7 produces slightly more aesthetically coherent results but is less precise at locking facial identity than --cref in v6. If strict face consistency is the priority over aesthetic quality, --v 6.0 with --cref is still worth using specifically for that reason.
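Because the reference flag depends on the model version, it can help to generate it from the version rather than typing it by hand. The sketch below assumes the v7 Omni Reference flags are --oref and --ow, and that --cref applies to any 6.x version; check both against the current Midjourney documentation before relying on it. The function name is hypothetical, and the weight is passed through unchanged because --cw and --ow do not share a scale.

```python
def reference_flags(version: str, image_url: str, weight: int) -> str:
    """Build the reference portion of a prompt for a given model version."""
    major = int(version.split(".")[0])  # "6.0" -> 6, "7" -> 7
    if major == 6:
        # v6: character reference plus character weight.
        return f"--cref {image_url} --cw {weight} --v {version}"
    if major == 7:
        # v7 (assumed flags): Omni Reference plus omni weight.
        return f"--oref {image_url} --ow {weight} --v {version}"
    raise ValueError(f"no reference flags known for version {version!r}")
```

Raising on unknown versions is deliberate: a silently ignored reference flag is the failure mode described above, so an explicit error is the safer default.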

Method 4: Seed Locking as a Consistency Layer

Reusing the same seed reduced facial drift across image sequences by approximately 15–20% in controlled testing. Seed locking alone is not a face consistency solution: it produces thematic similarity rather than identity consistency. But combined with --cref, it provides a meaningful additional anchor. (Source: Midjourney)

The combination I use for production character work:

[scene description], [subject short description] --cref [base_image_url] --cw 65 --seed [base_image_seed] --ar 4:5 --style raw --v 6.0

Stacking --cref and a locked seed together is the highest-reliability configuration in my workflow.
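When the same character appears in many scenes, assembling that template in code keeps the reference URL, seed, and weight from drifting between prompts. This is a minimal sketch of the template above; the class and function names are hypothetical, and it only builds the prompt string, it does not talk to Midjourney.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CharacterRef:
    """The fixed parts of a character: reference image, its seed, and weight."""
    image_url: str
    seed: int
    cw: int = 65  # the value used in the template above


def build_prompt(scene: str, subject: str, ref: CharacterRef,
                 aspect: str = "4:5", version: str = "6.0") -> str:
    """Fill in the production template with one scene and one character."""
    if not 0 <= ref.cw <= 100:
        raise ValueError("--cw must be between 0 and 100")
    return (
        f"{scene}, {subject} "
        f"--cref {ref.image_url} --cw {ref.cw} --seed {ref.seed} "
        f"--ar {aspect} --style raw --v {version}"
    )
```

Freezing the dataclass means a character's reference cannot be changed halfway through a batch by accident; a new reference requires a new object.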

Before and After: Reference Image Quality Makes or Breaks the System

Bad workflow — using a real photograph as reference, no --cw specified:

South Asian businessman in glass office building, confident posture, city skyline behind --cref [photo_URL] --ar 4:5 --style raw

Improved workflow — Midjourney-native reference, --cw calibrated, seed locked:

South Asian businessman aged 38 in modern glass-wall office, city skyline background, natural window light from right, confident three-quarter stance, dark structured blazer --cref [mj_generated_URL] --cw 65 --seed 4829103 --ar 4:5 --style raw --v 6.0

The second prompt does not just add parameters — it uses a native reference, specifies lighting independently of the reference, and pins the seed. Each element is doing specific consistency work.
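The difference between the two prompts can be checked mechanically before anything is submitted. The sketch below is a hypothetical pre-flight check, not a Midjourney feature: it only looks for the presence of flags, so it cannot tell whether the reference image is native or whether the lighting is described in the prompt text.

```python
import re
from typing import List

# Flags the improved workflow relies on (taken from the prompt above).
REQUIRED_FLAGS = ("--cref", "--cw", "--seed", "--v")


def missing_flags(prompt: str) -> List[str]:
    """Return the required flags that do not appear in the prompt."""
    present = set(re.findall(r"--[a-z]+", prompt))
    return [flag for flag in REQUIRED_FLAGS if flag not in present]


if __name__ == "__main__":
    bad = ("South Asian businessman in glass office building "
           "--cref [photo_URL] --ar 4:5 --style raw")
    print(missing_flags(bad))
```

Run against the first prompt, this reports the missing weight, seed, and version pin; the second prompt passes with an empty list.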

Real-World Gotchas — My Personal Take

Stacking --cref and --sref needs careful weighting, or the face loses. When a character reference and a style reference share a prompt and the style is overriding the face, lower the style weight (--sw) or simplify the style prompt to three to five tokens. I have had elaborate style references completely override the --cref face when the style's associated aesthetic carried strongly gendered or aged facial features.

Extreme scene changes destroy consistency even with --cref. Changing lighting angle by more than 90 degrees, switching from front-facing to full profile, or adding heavy headwear all significantly drop the face retention rate. The model has less reference geometry to anchor from when the view changes drastically. For batches requiring extreme angle variation, I budget for a higher revision rate on those specific shots.

Reference image URLs expire. Midjourney-generated image URLs are not permanent. I learned this the hard way when I came back to a client project three months later and found all my --cref URLs were dead. Now I download the reference image, re-upload it at the start of each new session, and use the fresh URL. Takes thirty seconds and saves hours.

Honest real-world expectation: plan for a 70–80% success rate, not 100%. Even with the best-practice setup (native reference, calibrated --cw, locked seed, v6), roughly one in five generations will drift enough that a sharp-eyed client would notice. I build this into my client briefs explicitly. I generate 30–40% more images than I intend to deliver, select the consistent subset, and discard the rest. That buffer is the real cost of face consistency work.
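The buffer follows directly from the success rate: to keep N consistent images on average, generate about N divided by the rate. The sketch below is a back-of-envelope calculation under that assumption; the function name is hypothetical, and the result is an expected count, not a guarantee for any single batch.

```python
import math


def images_to_generate(deliverables: int, success_rate: float) -> int:
    """Batch size whose expected number of usable images covers the order."""
    if deliverables < 0:
        raise ValueError("deliverables must not be negative")
    if not 0.0 < success_rate <= 1.0:
        raise ValueError("success_rate must be in (0, 1]")
    # Expected usable images = batch size * success rate, so divide and round up.
    return math.ceil(deliverables / success_rate)


if __name__ == "__main__":
    for rate in (0.70, 0.75, 0.80):
        print(f"rate {rate:.2f}: generate {images_to_generate(10, rate)} for 10 deliverables")
```

At a 0.75 rate the overhead works out to about a third more images, which lines up with the 30–40% buffer described above; a cautious brief would add a little on top, since an unlucky batch can fall below the average.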

Conclusion

Face consistency in AI generation is a probability management problem, not a solved technical feature. --cref works, but its reliability ceiling is around 75–80% under ideal conditions — and most conditions are not ideal. Using a Midjourney-native reference image, calibrating --cw away from the default, locking the base seed, and pinning the model version gives you the highest practical consistency rate available right now. The AIPromptHub gallery documents portrait prompts that include the exact reference parameters used — useful as a baseline when building a new character workflow from scratch.