Guide

How AI Actually Reads Your Prompt: A Token-by-Token Breakdown

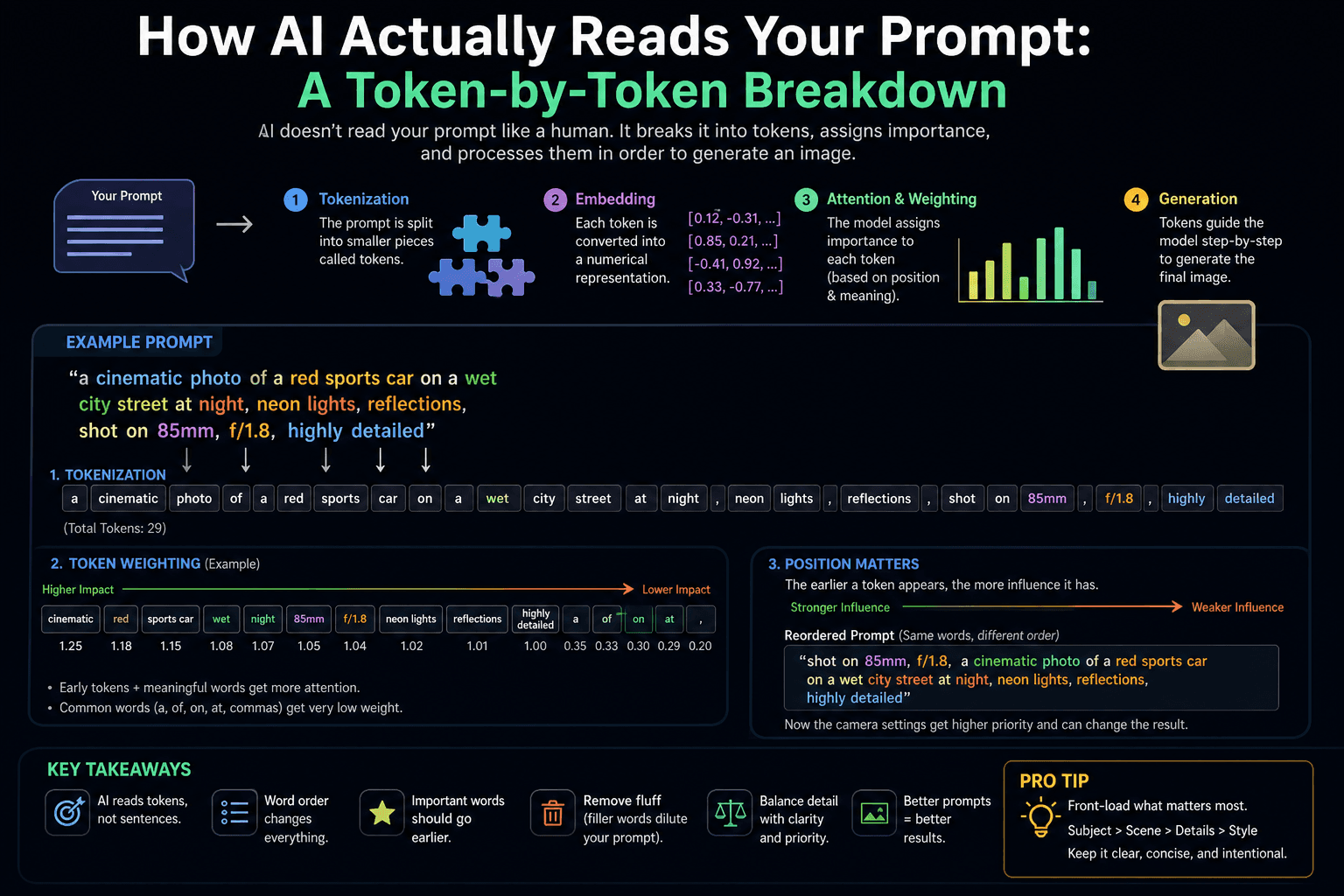

Midjourney doesn't read your prompt like a sentence — it processes weighted tokens with positional bias. Here's exactly what that means for your outputs.

Published May 4, 2026

A few months back I was building a batch of product mockups for a Fiverr client — luxury watches on dark marble surfaces. I had what felt like an airtight prompt: subject, material, lighting, composition, mood, camera specs, the works. Twenty-two words before I even got to the style parameters.

Every single generation kept pushing the marble texture to the extreme background and centering on the watch face from straight above. Not what I briefed. Not even close.

I spent an hour varying the prompt before I finally ran /shorten on it and actually looked at what Midjourney thought the high-weight tokens were. "Marble" was sitting at 0.09. It was barely registering. Meanwhile "luxury" — a vague adjective I threw in without thinking — was pulling 0.74 and steering the entire composition toward its own interpretation of "luxury watch photography."

That was the session where I stopped treating Midjourney like a search engine and started treating it like a system with a specific, mechanical reading order. Everything got better pretty quickly after that.

What "Reading a Prompt" Actually Means for a Diffusion Model

First, the model does not read your text the way you do. It does not process meaning, context, or intent in the way a human would. What it actually does is convert your text into tokens, and then use those tokens to guide a denoising process across a matrix of pixel data.

When you craft a prompt, the AI breaks it down into individual tokens — which can be phrases, words, or even syllables that the model recognises and links to specific concepts, colours, or pixel patterns. These tokens are the building blocks of the generated image. Image Work India

Midjourney doesn't see your words — it sees tokens, roughly word pieces. Each token activates a corresponding set of learned visual associations from the training data. "85mm" activates a cluster of associations built from millions of photographs taken with that focal length. "Chiaroscuro" activates a cluster built from Renaissance paintings and dramatic portrait photography. The model then tries to synthesise an image that satisfies as many of those activated associations simultaneously as possible. PromptsEra

Here's the part most guides skip: not all tokens get equal attention. Two factors determine how much influence a token actually has on your output — its position in the prompt and its inherent conceptual weight in the training data.

Positional Bias: Why Word Order Is Not Optional

All words have a default weight of 1, but words at the start of a prompt have a greater effect on the result than words at the end. Cloud Retouch

This is not a quirk. It is architecture. The attention mechanism in the underlying transformer model processes earlier tokens with higher priority during the denoising pass. By the time it gets to word 15 or 20 in your prompt, those tokens are competing for diminishing computational attention against everything that came before them.

V7 and V8 weight early tokens heavily — lead with what you actually want to see. If your primary subject is buried in the middle of a long scene description, you are actively working against the model's reading order. PromptsEra

Conceptual Weight: Why Some Words Hijack Everything

Certain tokens carry an enormous amount of pre-baked visual information from the training data. A term like "Art Nouveau," "cyberpunk," or "Rembrandt lighting" does not just contribute its literal meaning — it activates a dense cluster of stylistic associations that can pull the entire generation toward its aesthetic logic.

Midjourney achieves weighting by tokenizing its prompts. Each token activates a specific part of the model, which then guides the image generation process to produce results in that style. Highly concept-rich terms can monopolise many tokens unless an equally powerful phrase or many other words are added to counteract it. Image Work India

This is why a single strong style keyword like "oil painting" in the middle of a photorealism prompt can override your --style raw flag and render everything in thick brushstrokes. The token's conceptual gravity is stronger than the surrounding descriptors.

Prompt Dilution: Why Longer Is Not Better

As you add words to a prompt, they steal the model's attention from the other words and dilute their strength. When prompts get really long — known as "splatter-prompting" — many of the words don't even meet the threshold to be a token and get completely ignored. Image Work India

If your prompt exceeds 150 tokens, you are probably over-specifying. Cut the adjective spam. PromptsEra

The instinct to add more descriptive words when outputs are wrong is almost always counterproductive. Each new word redistributes the attention budget. Adding "extremely detailed" and "hyper-realistic" and "award-winning photography" is not stacking bonuses — it is crowding out the tokens that actually carry specific visual information.

The Practical Fix: Structuring Prompts Around Token Priority

Once you understand the mechanics, the workflow change is straightforward. Stop writing prompts as descriptions and start writing them as priority queues.

The Priority Structure I Now Use

- Primary subject (first 3–5 words) — what the image is fundamentally about

- Critical modifiers — specific attributes of the subject that cannot drift

- Environment/context — where the scene takes place

- Technical camera parameters — lens, aperture, lighting source

- Style/mood — last, because these are the most likely to hijack if placed early

Here is a before-and-after from my actual watch mockup project:

Bad prompt (weak subject positioning, style token placed too early):

luxury dark moody editorial watch photography on Italian Carrara marble surface with cinematic lighting and bokeh background, product shot, commercial quality, 8K, photorealistic --ar 16:9 --style raw

Good prompt (subject first, high-weight style tokens neutralised or repositioned):

luxury automatic watch::2 positioned on Italian Carrara marble surface::1.5, single soft overhead studio light, bokeh background, 85mm f/2.8 lens compression, neutral dark grey ambient, commercial product photography, photorealistic --ar 16:9 --style raw --hd

The :: weight syntax gives you explicit control over token priority. Word weights let you tell Midjourney which parts of the prompt matter most — add :: followed by a number after any word or phrase. Higher numbers get more visual weight. By explicitly weighting watch::2 and marble::1.5, I am overriding the positional bias that was previously burying those elements under "luxury" and "moody." Image Work India

Here is a second example — a portrait prompt where the clothing token was hijacking the face rendering:

Bad prompt (attire token pulling too much weight early):

dark ribbed turtleneck man, cinematic portrait, dramatic lighting, photorealistic, detailed face, brown skin, intense expression --ar 4:5

Good prompt (subject anatomy first, attire demoted):

close-up portrait of South Asian man, 35 years old, natural skin texture with visible pores, direct camera gaze, slight jaw tension, warm side-key light from left, dark ribbed turtleneck::0.5, neutral dark background, 85mm equivalent, photorealistic --ar 4:5 --style raw

Setting dark ribbed turtleneck::0.5 cuts its influence in half without removing it. The face now dominates the generation because the face-relevant tokens have a clear positional and numerical advantage.

Using /shorten as a Prompt X-Ray

The /shorten command shows you how strong or weak every token in your prompt is, assigning ratings from 0 to 1. This is the fastest way to identify which words are doing real work and which are just burning attention budget. Image Work India

Run /shorten on any prompt that is not behaving as expected before you start tweaking blindly. Nine times out of ten, there is a high-weight token near the middle of the prompt pulling the composition somewhere you did not intend.

Real-World Gotchas — My Personal Take

A few things that still catch me out even knowing all of this:

The :: weight syntax behaves differently across model versions. What produced a balanced composition on v7 with subject::2 sometimes over-amplifies on v8.1. I always test with ::1.5 first and adjust upward only if the token is still being ignored.

Negative weights via :: are not the same as --no. The --no command is a shortcut for assigning a weight of ::-0.5, which is a reasonably strong exclusion signal. But ::-1 is a much harder exclusion — and I have found that ::-1 on strong style tokens sometimes destabilises the entire generation rather than cleanly removing the element. Use --no for most exclusions and reserve negative :: weights for when --no is not enough. Cloud Retouch

v8.1's improved prompt adherence changes the dilution threshold. The old advice was to keep prompts under 75 words for best results. In my testing on v8.1, the model handles longer prompts with noticeably less degradation — but the positional weighting still applies. Do not use improved adherence as an excuse to stuff adjectives back in. Long precise prompts are fine. Long vague prompts still dilute badly.

Some high-weight tokens cannot be neutralised cleanly. Terms like "anime," "oil painting," or specific artist names are so heavily weighted in the training data that even setting them to ::0.5 often fails to suppress their visual influence. If you need to include them as minor references, test thoroughly. Sometimes the only fix is removing them entirely and describing the specific visual qualities you want instead.

Conclusion

The prompt is not a description — it is a weighted instruction set, and the model processes it mechanically according to position and conceptual density. Lead with your primary subject, use :: weights to override positional bias when needed, run /shorten when something feels off, and keep your token budget focused. That is most of what separates consistent outputs from unpredictable ones. The AIPromptHub gallery has tested prompts with documented outputs if you want working examples of this structure applied across different use cases — worth cross-referencing before building your own templates.